Double Dispatch - RTTI vs. Pure Vtable

As Jeremy Clarkson would say, I have been inundated with a request to performance test a dynamic_cast DD mechanism against the pure vtable one. The results surprised me, both in Debug and Release modes. So here is the dynamic_cast implementation:

    void c_rtti::foobar(a_rtti & a2) {
        // First attempt: is the argument a c_rtti?
        c_rtti* c_ = dynamic_cast<c_rtti*>(&a2);
        if (c_) {
            ::foobar(*this, *c_);
            return;
        }
        // Second attempt: is the argument a b_rtti?
        b_rtti* b_ = dynamic_cast<b_rtti*>(&a2);
        if (b_) {
            ::foobar(*b_, *this);
        }
    }

I have used the most expensive case, where the above function is called with the a2 parameter referring to an instance of b_rtti, thus triggering two dynamic casts. I used the same combination of objects for the vtable implementation for consistency, although it doesn't matter there, as the sequence of execution is the same for any combination of objects. The test ran for 4 million iterations.
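For readers who have not seen it, pure vtable double dispatch can be sketched roughly as follows. This is an illustration under assumptions, not the benchmarked code: the names a_vt, b_vt, c_vt, foobar_from, pairing and run_example are all made up, and the functions return a tag only so that the chosen overload can be checked.

```cpp
#include <cassert>

// Hypothetical names; these are not the classes measured in this post.
enum class pairing { b_b, b_c, c_b, c_c };  // <receiver>_<argument>

struct b_vt;
struct c_vt;

struct a_vt {
    virtual ~a_vt() = default;
    // First virtual call: selects on the dynamic type of the receiver.
    virtual pairing foobar(a_vt& rhs) = 0;
    // Second virtual call: selects on the dynamic type of the argument,
    // while the overload carries the receiver's (now static) type.
    virtual pairing foobar_from(b_vt& lhs) = 0;
    virtual pairing foobar_from(c_vt& lhs) = 0;
};

struct b_vt : a_vt {
    pairing foobar(a_vt& rhs) override { return rhs.foobar_from(*this); }
    pairing foobar_from(b_vt&) override { return pairing::b_b; }
    pairing foobar_from(c_vt&) override { return pairing::c_b; }
};

struct c_vt : a_vt {
    pairing foobar(a_vt& rhs) override { return rhs.foobar_from(*this); }
    pairing foobar_from(b_vt&) override { return pairing::b_c; }
    pairing foobar_from(c_vt&) override { return pairing::c_c; }
};

// Same shape as the test in this post: a c receives a b.
inline pairing run_example() {
    c_vt cInstance;
    b_vt bInstance;
    a_vt& lhs = cInstance;
    a_vt& rhs = bInstance;
    pairing result = lhs.foobar(rhs);
    assert(result == pairing::c_b);  // reached via two virtual calls, no casts
    return result;
}
```

Whatever the two dynamic types are, this sketch always makes exactly two virtual calls, which is why the choice of objects does not change the path taken by the vtable version.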

Here is the test code:

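    c cInstance;
    a& a_ = cInstance;  // named object: a non-const reference cannot bind to the temporary c() in standard C++
    b bInstance;
    a& b_ = bInstance;
    a_.foobar(b_);      // receiver is a c, argument is a b

Just to show what actually happens in both implementations, here are the sequence diagrams.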

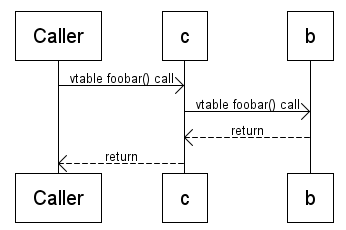

vtable:

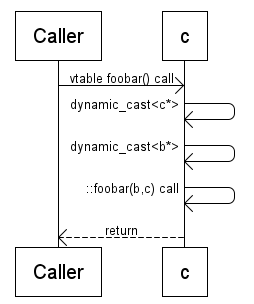

dynamic_cast:

The results

In Debug mode, the RTTI implementation is about 23% faster:

No RTTI - 1685 milliseconds.

With RTTI - 1295 milliseconds (23.15% faster).

So this is pretty insignificant in a real-life application, and I doubt it would show up in the profiler. By my calculation, the difference between the two is about 100 clock cycles per call (on a 1 GHz CPU): 390 milliseconds spread over 4 million calls is just under 100 nanoseconds each.

In Release mode things get interesting:

No RTTI - 62 milliseconds.

With RTTI - 780 milliseconds (1158.06% slower).

The pure vtable version is more than twelve times faster!!! Of course, this again means very little in the real world. It's worth noting that the RTTI version is less than twice as fast in Release mode as in Debug mode (about 1.7 times). What is also interesting is that in Debug mode the vtable version is slower by about 100 clock cycles per call, while in Release mode it's faster by about 180.
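The per-call figures quoted above follow from simple arithmetic on the totals. As a sketch, assuming the 4 million iterations and the 1 GHz clock mentioned in this post (the function and constant names are made up for illustration):

```cpp
#include <cassert>

// Difference per call, in nanoseconds, between two total run times given in
// milliseconds and measured over the same number of calls.
constexpr double per_call_ns(double slower_ms, double faster_ms, double calls) {
    return (slower_ms - faster_ms) * 1.0e6 / calls;
}

// Nanoseconds to clock cycles at a given clock rate (1 GHz = 1 cycle per ns).
constexpr double to_cycles(double ns, double clock_ghz) {
    return ns * clock_ghz;
}

constexpr double kCalls = 4.0e6;   // iterations, as stated in this post
constexpr double kClockGhz = 1.0;  // assumed 1 GHz CPU, as in this post

// Debug: vtable 1685 ms against RTTI 1295 ms.
constexpr double debug_cycles =
    to_cycles(per_call_ns(1685.0, 1295.0, kCalls), kClockGhz);   // 97.5

// Release: RTTI 780 ms against vtable 62 ms.
constexpr double release_cycles =
    to_cycles(per_call_ns(780.0, 62.0, kCalls), kClockGhz);      // 179.5

// Release-mode ratio of the two totals (RTTI time over vtable time).
constexpr double release_ratio = 780.0 / 62.0;                   // about 12.6

inline void check_figures() {
    assert(debug_cycles > 97.0 && debug_cycles < 98.0);
    assert(release_cycles > 179.0 && release_cycles < 180.0);
    assert(release_ratio > 12.5 && release_ratio < 12.7);
}
```

On a faster CPU the cycle counts scale with the clock rate, but the nanosecond differences per call stay the same for the same totals.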

To summarise: the real-life value of this performance analysis is zero. If you are Google and you had 100 PhDs design the program and another 100 write the library routines for it, doing this optimisation will improve the performance by about 0.000001%. In any other case, this is a textbook case of premature optimisation.
